Auto Racing Test Drives Its Own EV Future

The idea of smart roads is not new. It includes efforts like traffic lights that automatically adjust their timing based on sensor data and streetlights that automatically regulate their brightness to reduce energy consumption. PerceptIn, of which coauthor Liu is founder and CEO, has demonstrated at its own test track, in Beijing, that streetlight control can make traffic 40 percent more efficient. (Liu and coauthor Gaudiot, Liu's former doctoral advisor at the University of California, Irvine, often collaborate on autonomous driving projects.)

But these are piecemeal changes. We propose a much more ambitious approach that combines intelligent roads and intelligent vehicles into an integrated, fully intelligent transportation system. The sheer amount and accuracy of the combined information will allow such a system to reach unparalleled levels of safety and efficiency.

Human drivers have a crash rate of 4.2 accidents per million miles; autonomous cars must do much better to gain acceptance. However, there are corner cases, such as blind spots, that afflict both human drivers and autonomous vehicles, and there is currently no way to handle them without the help of an intelligent infrastructure.

Putting much of the intelligence into the infrastructure will also lower the cost of autonomous vehicles. A fully self-driving vehicle is still quite expensive to build. But gradually, as the infrastructure becomes more powerful, it will be possible to transfer more of the computational workload from the vehicles to the roads. Eventually, autonomous vehicles will need to be equipped with only basic perception and control capabilities. We estimate that this transfer will reduce the cost of autonomous vehicles by more than half.

Here’s how it could work: It’s Beijing on a Sunday morning, and sandstorms have turned the sun blue and the sky yellow. You’re driving through the city, but neither you nor any other driver on the road has a clear view. Yet each car, as it moves along, discerns a piece of the puzzle. That information, combined with data from sensors embedded in or near the road and from relays from weather services, feeds into a distributed computing system that uses artificial intelligence to build a single model of the environment. That model can recognize static objects along the road as well as objects moving along each car’s projected path.
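The fusion step described above can be pictured with a small sketch. It assumes every car and roadside unit already reports detections as positions and velocities in one shared map frame, and it uses a simple greedy distance gate to decide which reports describe the same object. The class names, the 2-meter gate, and the 0.5 m/s static threshold are all hypothetical choices, not details of the deployed system.

```python
from dataclasses import dataclass, field
from math import hypot

# Hypothetical tuning constants, chosen only for illustration.
GATE_M = 2.0        # reports closer than this to a cluster center are merged
STATIC_SPEED = 0.5  # m/s; slower fused objects are labeled static


@dataclass
class Observation:
    source: str  # reporting car or roadside unit, e.g. "car-1" (hypothetical IDs)
    x: float     # position in a shared map frame, meters
    y: float
    vx: float    # estimated velocity, m/s
    vy: float


@dataclass
class FusedObject:
    x: float
    y: float
    vx: float
    vy: float
    sources: set = field(default_factory=set)

    @property
    def is_static(self) -> bool:
        return hypot(self.vx, self.vy) < STATIC_SPEED


def fuse(observations):
    """Greedily cluster reports from all sources and average each cluster."""
    clusters = []
    for obs in observations:
        for cluster in clusters:
            cx = sum(o.x for o in cluster) / len(cluster)
            cy = sum(o.y for o in cluster) / len(cluster)
            if hypot(obs.x - cx, obs.y - cy) <= GATE_M:
                cluster.append(obs)
                break
        else:  # no existing cluster is close enough: start a new object
            clusters.append([obs])

    fused = []
    for cluster in clusters:
        n = len(cluster)
        fused.append(FusedObject(
            x=sum(o.x for o in cluster) / n,
            y=sum(o.y for o in cluster) / n,
            vx=sum(o.vx for o in cluster) / n,
            vy=sum(o.vy for o in cluster) / n,
            sources={o.source for o in cluster},
        ))
    return fused


# A parked bus and a moving sedan, each seen by a car and by a roadside unit.
reports = [
    Observation("car-1", 100.0, 5.0, 0.0, 0.0),
    Observation("roadside-1", 100.6, 5.2, 0.1, 0.0),
    Observation("car-2", 140.0, 2.0, 10.0, 0.0),
    Observation("roadside-1", 140.4, 2.1, 9.6, 0.0),
]
world = fuse(reports)
for obj in world:
    print(f"({obj.x:.1f}, {obj.y:.1f}) static={obj.is_static} seen by {sorted(obj.sources)}")
```

A production system would more likely use probabilistic data association and uncertainty-weighted averaging; the greedy gate simply keeps the idea of "many partial views, one model" visible.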

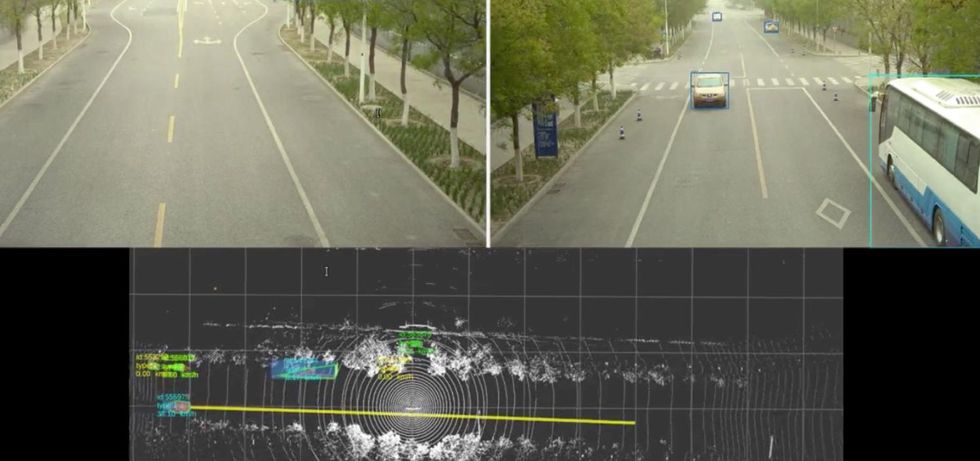

The self-driving car, coordinating with the roadside system, sees right through a sandstorm swirling in Beijing to discern a static bus and a moving sedan [top]. The system even indicates its predicted trajectory for the detected sedan via a yellow line [bottom], effectively forming a semantic high-definition map. Shaoshan Liu

Properly expanded, this approach can prevent most accidents and traffic jams, problems that have plagued road transportation since the introduction of the automobile. It can deliver the goals of a self-sufficient autonomous vehicle without demanding more than any one car can provide. Even in a Beijing sandstorm, every person in every car will arrive at their destination safely and on time.

To date, we have deployed a model of this system in several cities in China as well as on our test track in Beijing. For instance, in Suzhou, a city of 11 million west of Shanghai, the deployment is on a public road with three lanes on each side, with phase one of the project covering 15 kilometers of highway. A roadside system is deployed every 150 meters along the road, and each roadside system consists of a compute unit equipped with an Intel CPU and an Nvidia 1080Ti GPU, a series of sensors (lidars, cameras, radars), and a communication component (a roadside unit, or RSU). Lidar is included because it provides more accurate perception than cameras, especially at night. The RSUs then communicate directly with the deployed vehicles to facilitate the fusion of roadside data and vehicle-side data on the vehicle.
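The Suzhou figures imply a concrete hardware count. The helper below is simple arithmetic on the numbers in the text (15 kilometers, one roadside system every 150 meters); whether a unit also sits at both ends of the stretch is an assumption, so the function exposes it as a flag.

```python
import math


def roadside_units_needed(road_length_m: float, spacing_m: float,
                          include_both_ends: bool = True) -> int:
    """Number of roadside systems for evenly spaced placement along a road."""
    intervals = math.ceil(road_length_m / spacing_m)
    # Placing a unit at both ends adds one post (the classic fence-post count).
    return intervals + 1 if include_both_ends else intervals


units = roadside_units_needed(15_000, 150)
# Per the text, each roadside system has one Intel CPU and one Nvidia 1080Ti GPU.
print(f"{units} roadside systems, so {units} GPUs for phase one")
```

Either way, phase one alone calls for on the order of 100 GPU-equipped roadside computers.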

Sensors and relays along the roadside make up one half of the cooperative autonomous driving system, with the hardware on the vehicles themselves making up the other half. In a typical deployment, our model employs 20 vehicles. Each vehicle bears a computing system, a suite of sensors, an engine control unit (ECU), and, to connect these components, a controller area network (CAN) bus. The road infrastructure, as described above, consists of similar but more advanced equipment. The roadside system’s high-end Nvidia GPU communicates wirelessly via its RSU, whose counterpart on the car is called the onboard unit (OBU). This back-and-forth communication facilitates the fusion of roadside data and vehicle data.

This deployment, at a campus in Beijing, consists of a lidar, two radars, two cameras, a roadside communication unit, and a roadside computer. It covers blind spots at corners and tracks moving obstacles, like pedestrians and vehicles, for the benefit of the autonomous shuttle that serves the campus. Shaoshan Liu

The infrastructure collects data on the local environment and shares it immediately with cars, thereby eliminating blind spots and otherwise extending perception in obvious ways. The infrastructure also processes data from its own sensors and from sensors on the cars to extract the meaning, producing what’s called semantic data. Semantic data might, for instance, identify an object as a pedestrian and locate that pedestrian on a map. The results are then sent to the cloud, where more elaborate processing fuses that semantic data with data from other sources to generate global perception and planning information. The cloud then dispatches global traffic information, navigation plans, and control commands to the cars.
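To make "semantic data" concrete, here is a minimal sketch of how a roadside unit might turn a raw detection into a labeled object on a map and serialize it for the cloud. It assumes the unit knows its own latitude and longitude and reports detections as east/north offsets in meters. The small-offset (equirectangular) approximation, the field names, the unit's coordinates, and the JSON encoding are illustrative assumptions, not PerceptIn's format.

```python
import json
import math
from dataclasses import asdict, dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


@dataclass
class SemanticObject:
    label: str   # what the object is, e.g. "pedestrian"
    lat: float   # where it is on the map, degrees
    lon: float


def locate_on_map(label: str, east_m: float, north_m: float,
                  unit_lat: float, unit_lon: float) -> SemanticObject:
    """Convert an offset from a roadside unit into map coordinates.

    Uses a flat-Earth approximation, which is adequate for the few hundred
    meters a single roadside unit covers.
    """
    dlat = math.degrees(north_m / EARTH_RADIUS_M)
    dlon = math.degrees(east_m / (EARTH_RADIUS_M * math.cos(math.radians(unit_lat))))
    return SemanticObject(label, unit_lat + dlat, unit_lon + dlon)


def encode_for_cloud(objects) -> bytes:
    """Serialize semantic objects for upload (hypothetical format)."""
    return json.dumps([asdict(o) for o in objects]).encode("utf-8")


# Hypothetical roadside unit position, roughly in Suzhou.
pedestrian = locate_on_map("pedestrian", east_m=0.0, north_m=111.19,
                           unit_lat=31.30, unit_lon=120.60)
payload = encode_for_cloud([pedestrian])
print(pedestrian, len(payload), "bytes")
```

The encoded record is well under a hundred bytes, far smaller than the raw lidar and camera data it summarizes.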

Each car at our test track starts in self-driving mode, at a level of autonomy that today’s best systems can manage. Each car is equipped with six millimeter-wave radars for detecting and tracking objects, eight cameras for two-dimensional perception, one lidar for three-dimensional perception, and GPS and inertial guidance to locate the vehicle on a digital map. The 2D- and 3D-perception results, as well as the radar outputs, are fused to generate a comprehensive view of the road and its immediate surroundings.
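One common way to combine the three sensor streams is late fusion: lidar supplies the 3D position, the camera supplies the object class, and radar supplies the speed. The sketch below associates them with simple gates (a bearing tolerance for the camera, a distance tolerance for radar). The article does not say which fusion method the test cars use, so the approach, class names, and thresholds here are assumptions.

```python
import math
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical association gates.
BEARING_GATE_DEG = 3.0  # camera box must lie within this angle of the lidar object
RADAR_GATE_M = 1.5      # radar return must lie within this distance


@dataclass
class LidarObject:
    x: float  # meters ahead of the car
    y: float  # meters to the left


@dataclass
class CameraDetection:
    bearing_deg: float  # angle of the 2D box center, 0 = straight ahead
    label: str


@dataclass
class RadarTrack:
    x: float
    y: float
    speed_mps: float


@dataclass
class FusedObject:
    x: float
    y: float
    label: str
    speed_mps: Optional[float]  # None if no radar return matched


def fuse_frame(lidar: List[LidarObject], camera: List[CameraDetection],
               radar: List[RadarTrack]) -> List[FusedObject]:
    """Late fusion for forward-facing sensors (bearings never wrap around)."""
    fused = []
    for obj in lidar:
        # Camera: match on viewing angle, since a 2D image has no depth.
        bearing = math.degrees(math.atan2(obj.y, obj.x))
        cam = min(camera, key=lambda c: abs(c.bearing_deg - bearing), default=None)
        label = "unknown"
        if cam is not None and abs(cam.bearing_deg - bearing) <= BEARING_GATE_DEG:
            label = cam.label
        # Radar: match on position, then borrow its speed measurement.
        rad = min(radar, key=lambda r: math.hypot(r.x - obj.x, r.y - obj.y), default=None)
        speed = None
        if rad is not None and math.hypot(rad.x - obj.x, rad.y - obj.y) <= RADAR_GATE_M:
            speed = rad.speed_mps
        fused.append(FusedObject(obj.x, obj.y, label, speed))
    return fused


frame = fuse_frame(
    lidar=[LidarObject(20.0, 0.0), LidarObject(30.0, 10.0)],
    camera=[CameraDetection(0.5, "car"), CameraDetection(18.4, "pedestrian")],
    radar=[RadarTrack(20.3, 0.2, 12.0)],
)
for obj in frame:
    print(obj)
```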

Next, these perception results are fed into a module that keeps track of each detected object (say, a car, a bicycle, or a rolling tire), drawing a trajectory that can be fed to the next module, which predicts where the target object will go. Finally, these predictions are handed off to the planning and control modules, which steer the autonomous vehicle. The car builds a model of its environment up to 70 meters out. All of this computation takes place within the car itself.
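The tracking and prediction stages can be sketched as follows. This assumes a nearest-neighbor tracker and a constant-velocity predictor, two of the simplest possible choices. The article does not specify the modules' internals, and every name here is hypothetical.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Track:
    track_id: int
    history: list = field(default_factory=list)  # [(t_s, x_m, y_m), ...]

    def velocity(self):
        """Finite-difference velocity from the last two positions."""
        if len(self.history) < 2:
            return 0.0, 0.0
        (t0, x0, y0), (t1, x1, y1) = self.history[-2], self.history[-1]
        dt = t1 - t0
        return (x1 - x0) / dt, (y1 - y0) / dt

    def predict(self, horizon_s: float, step_s: float = 0.5):
        """Constant-velocity forecast of future positions."""
        _, x, y = self.history[-1]
        vx, vy = self.velocity()
        steps = round(horizon_s / step_s)
        return [(x + vx * k * step_s, y + vy * k * step_s) for k in range(1, steps + 1)]


def _gap(track: Track, x: float, y: float) -> float:
    """Distance from a track's last known position to a new detection."""
    _, tx, ty = track.history[-1]
    return math.hypot(tx - x, ty - y)


class Tracker:
    """Nearest-neighbor tracker: each detection extends the closest free track."""

    def __init__(self, gate_m: float = 3.0):
        self.gate_m = gate_m
        self.tracks = []
        self._next_id = 0

    def update(self, t: float, detections):
        used = set()  # each track may absorb at most one detection per frame
        for x, y in detections:
            free = [tr for tr in self.tracks if tr.track_id not in used]
            best = min(free, key=lambda tr: _gap(tr, x, y), default=None)
            if best is not None and _gap(best, x, y) <= self.gate_m:
                best.history.append((t, x, y))
            else:
                best = Track(self._next_id, [(t, x, y)])
                self._next_id += 1
                self.tracks.append(best)
            used.add(best.track_id)


tracker = Tracker()
tracker.update(0.0, [(0.0, 0.0)])  # a cyclist first seen at the origin
tracker.update(0.1, [(1.0, 0.0)])  # 1 m farther along, 0.1 s later
forecast = tracker.tracks[0].predict(horizon_s=1.0)
print(forecast)  # positions 0.5 s and 1.0 s ahead, to be handed to planning
```

Real trackers typically use Kalman filters and optimal assignment; the point here is only the hand-off from detections to tracks to predicted positions.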

Meanwhile, the intelligent infrastructure is doing the same job of detection and tracking with radars, as well as 2D modeling with cameras and 3D modeling with lidar, finally fusing that data into a model of its own to complement what each car is doing. Because the infrastructure is spread out, it can model the environment as far out as 250 meters. The tracking and prediction modules on the cars then merge the wider and the narrower models into a comprehensive view.
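A minimal sketch of that merge, assuming both models are lists of object positions already transformed into the car's coordinate frame and that objects closer together than a hypothetical 2-meter gate are duplicates. The 70-meter and 250-meter ranges come from the text; the rule of trusting the car's own detections wherever they exist is an illustrative choice.

```python
import math

ONBOARD_RANGE_M = 70.0   # the car's own model, per the text
INFRA_RANGE_M = 250.0    # the infrastructure's model, per the text


def merge_models(onboard, infra, gate_m=2.0):
    """Merge the car's narrow model with the infrastructure's wide one.

    Both inputs are lists of (x, y) object positions in the car's frame.
    The car's own detections are kept; an infrastructure object is added
    only if no already-kept object lies within gate_m of it (for example,
    an object in a blind spot or beyond onboard range).
    """
    merged = [p for p in onboard if math.hypot(*p) <= ONBOARD_RANGE_M]
    for p in infra:
        if math.hypot(*p) > INFRA_RANGE_M:
            continue
        if all(math.hypot(p[0] - q[0], p[1] - q[1]) > gate_m for q in merged):
            merged.append(p)
    return merged


onboard = [(20.0, 0.0), (50.0, 3.0)]
infra = [
    (20.5, 0.2),   # same car the vehicle already sees: deduplicated
    (35.0, -4.0),  # pedestrian hidden behind a parked truck: added
    (180.0, 0.0),  # traffic beyond onboard range: added
    (300.0, 0.0),  # outside even the infrastructure's range: dropped
]
print(merge_models(onboard, infra))
```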

The car’s onboard unit communicates with its roadside counterpart to facilitate the fusion of data in the vehicle. The wireless standard, called Cellular-V2X (for “vehicle-to-X”), is not unlike that used in phones; communication can reach as far as 300 meters, and the latency (the time it takes for a message to get through) is about 25 milliseconds. With this link in place, many of the car’s blind spots are now covered by the system on the infrastructure.

Two modes of communication are supported: LTE-V2X, a variant of the cellular standard reserved for vehicle-to-infrastructure exchanges, and commercial mobile networks using the LTE and 5G standards. LTE-V2X is dedicated to direct communication between the road and the cars over a range of 300 meters. Although its latency is just 25 ms, it is paired with a low bandwidth, currently about 100 kilobytes per second.

In contrast, commercial 4G and 5G networks have unlimited range and significantly higher bandwidth (100 megabytes per second for downlink and 50 MB/s for uplink on commercial LTE). However, they have much higher latency, which poses a significant challenge for the moment-to-moment decision-making in autonomous driving.
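Simple arithmetic shows why a low-latency, low-bandwidth link favors compact, processed data over raw sensor streams. The sketch uses the 25 ms latency and roughly 100 KB/s bandwidth quoted for LTE-V2X; the message sizes are hypothetical round numbers, and the model ignores protocol overhead and retransmissions.

```python
V2X_LATENCY_S = 0.025                 # LTE-V2X latency, per the text
V2X_BANDWIDTH_BYTES_PER_S = 100_000   # about 100 kilobytes per second, per the text


def delivery_time_s(payload_bytes, latency_s=V2X_LATENCY_S,
                    bandwidth_bytes_per_s=V2X_BANDWIDTH_BYTES_PER_S):
    """Latency plus serialization time for one message."""
    return latency_s + payload_bytes / bandwidth_bytes_per_s


def distance_during_s(speed_kmh, seconds):
    """How far a vehicle moves while a message is in flight, in meters."""
    return speed_kmh / 3.6 * seconds


semantic_update = 50 * 40    # hypothetical: 50 objects at ~40 bytes each
raw_point_cloud = 1_000_000  # hypothetical: ~1 MB lidar frame

print(f"semantic update: {delivery_time_s(semantic_update) * 1000:.0f} ms")
print(f"raw lidar frame: {delivery_time_s(raw_point_cloud):.1f} s")
print(f"car moves {distance_during_s(50, V2X_LATENCY_S):.2f} m in 25 ms at 50 km/h")
```

Under these assumptions a compact object list arrives in under 50 ms, while a single raw point cloud would take about ten seconds over the same link, one reason to share processed results rather than raw sensor data.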

A roadside deployment on a public road in Suzhou is arranged along a green pole bearing a lidar, two cameras, a communication unit, and a computer. It greatly extends the range and coverage for the autonomous vehicles on the road. Shaoshan Liu

Note that when a vehicle travels at 50 kilometers (31 miles) per hour, its stopping distance is 35 meters when the road is dry and 41 meters when it is slick. The 250-meter perception range that the infrastructure enables therefore gives the vehicle a large margin of safety. On our test track, the disengagement rate (the frequency with which the safety driver must override the automated driving system) is at least 90 percent lower when the infrastructure’s intelligence is turned on to augment the autonomous car’s onboard system.
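The stopping distances quoted above are consistent with the standard reaction-plus-braking model, d = v * t_r + v^2 / (2 * mu * g). The reaction time (1.5 s) and friction coefficients (0.7 dry, 0.5 wet) below are textbook-style assumptions, not values from the authors, but they land close to the 35-meter and 41-meter figures.

```python
G = 9.81  # gravitational acceleration, m/s^2


def stopping_distance_m(speed_kmh: float, friction: float, reaction_s: float = 1.5) -> float:
    """Reaction distance plus braking distance on a level road."""
    v = speed_kmh / 3.6                    # km/h to m/s
    reaction = v * reaction_s              # distance covered before braking starts
    braking = v ** 2 / (2 * friction * G)  # v^2 / (2 * mu * g)
    return reaction + braking


dry = stopping_distance_m(50, friction=0.7)  # assumed dry-asphalt friction
wet = stopping_distance_m(50, friction=0.5)  # assumed slick-road friction
print(f"dry: {dry:.1f} m, wet: {wet:.1f} m")
print(f"margin within the 250 m infrastructure range: {250 - wet:.0f} m")
print(f"margin within the 70 m onboard range: {70 - wet:.0f} m")
```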

Experiments on our test track have taught us two things. First, because traffic conditions change throughout the day, the infrastructure’s computing units are fully in harness during rush hours but largely idle in off-peak hours. This is more a feature than a bug, because it frees up much of the substantial roadside computing power for other tasks, such as optimizing the system. Second, we find that we can indeed optimize the system, because our growing trove of local perception data can be used to fine-tune our deep-learning models to sharpen perception. By putting together idle compute power and the archive of sensory data, we have been able to improve performance without imposing any additional burdens on the cloud.
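The idea of reusing off-peak roadside compute for fine-tuning can be expressed as a simple scheduler. The sketch assumes an hourly GPU-utilization profile, treats any hour below a hypothetical 30 percent utilization as idle, and fills the least-busy idle hours until the fine-tuning job's GPU-hour budget is met. None of these numbers comes from the deployment.

```python
def plan_finetuning(hourly_utilization, gpu_hours_needed, idle_threshold=0.3):
    """Pick off-peak hours for a fine-tuning job on a roadside GPU.

    hourly_utilization: 24 values in [0, 1], one per hour of the day.
    Returns (hours to use, GPU-hours still unscheduled).
    """
    idle = sorted((u, h) for h, u in enumerate(hourly_utilization) if u < idle_threshold)
    plan, remaining = [], gpu_hours_needed
    for u, h in idle:
        if remaining <= 0:
            break
        plan.append(h)
        remaining -= 1.0 - u  # only the spare capacity counts toward the job
    return sorted(plan), max(remaining, 0.0)


# Hypothetical profile: busy at rush hours, moderate by day, nearly idle at night.
profile = [0.1] * 24
for hour in range(10, 17):
    profile[hour] = 0.5
for hour in (7, 8, 9, 17, 18, 19):
    profile[hour] = 0.9

hours, shortfall = plan_finetuning(profile, gpu_hours_needed=4)
print(hours, shortfall)
```

A nonzero shortfall would signal that one day's idle capacity is not enough and the job should continue the next night.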

It’s hard to get people to agree to build a vast system whose promised benefits will come only after it has been completed. To solve this chicken-and-egg problem, we must proceed through three consecutive stages:

Stage 1: infrastructure-augmented autonomous driving, in which vehicles fuse vehicle-side perception data with roadside perception data to improve the safety of autonomous driving. Vehicles will still be heavily loaded with self-driving equipment.

Stage 2: infrastructure-guided autonomous driving, in which vehicles can offload all perception tasks to the infrastructure to reduce per-vehicle deployment costs. For safety reasons, basic perception capabilities will remain on the autonomous vehicles in case communication with the infrastructure goes down or the infrastructure itself fails. Vehicles will need notably less sensing and processing hardware than in stage 1.

Stage 3: infrastructure-planned autonomous driving, in which the infrastructure is charged with both perception and planning, thus achieving maximum safety, traffic efficiency, and cost savings. In this stage, vehicles are equipped with only very basic sensing and computing capabilities.
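The three stages differ mainly in which side produces perception and plans, and in what happens when the link fails. A hypothetical dispatcher makes that division explicit. The stage names follow the text; the function, its return values, and the assumption that the car's remaining onboard capability serves as a degraded fallback in every stage are illustrative only.

```python
from enum import Enum


class Stage(Enum):
    AUGMENTED = 1  # stage 1: fuse vehicle-side and roadside perception
    GUIDED = 2     # stage 2: perception offloaded to the infrastructure
    PLANNED = 3    # stage 3: infrastructure handles perception and planning


def responsibilities(stage: Stage, link_ok: bool) -> dict:
    """Which side handles perception and planning for a given stage."""
    if not link_ok:
        # Assumption: the basic onboard sensing and computing that remains in
        # every stage takes over as a degraded fallback.
        return {"perception": "vehicle", "planning": "vehicle"}
    if stage is Stage.AUGMENTED:
        return {"perception": "vehicle+roadside", "planning": "vehicle"}
    if stage is Stage.GUIDED:
        return {"perception": "roadside", "planning": "vehicle"}
    return {"perception": "roadside", "planning": "roadside"}


for stage in Stage:
    print(stage.name, responsibilities(stage, link_ok=True),
          responsibilities(stage, link_ok=False))
```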

Technical challenges do exist. The first is network stability. At high vehicle speeds, the process of fusing vehicle-side and infrastructure-side data is extremely sensitive to network jitter. Using commercial 4G and 5G networks, we have observed network jitter ranging from 3 to 100 ms, enough to effectively prevent the infrastructure from helping the car. Even more critical is security: We need to ensure that a hacker cannot attack the communication network, or even the infrastructure itself, to pass incorrect information to the cars, with potentially lethal consequences.
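Both problems surface at the receiving end, where an onboard unit must decide whether a roadside message is fresh enough and genuine enough to fuse. The sketch below checks an HMAC-SHA256 tag using Python's standard library, rejects messages older than a hypothetical 100 ms budget, and shifts the remaining object positions forward by the message's age. The shared-key HMAC is a deliberate simplification: deployed V2X security is typically built on certificate-based digital signatures (IEEE 1609.2 is one such standard). The message format is invented for illustration, and the code assumes synchronized clocks.

```python
import hashlib
import hmac
import json

MAX_AGE_S = 0.100  # hypothetical freshness budget; observed jitter was 3 to 100 ms


def sign(payload: dict, key: bytes) -> dict:
    """Roadside side: serialize the payload and attach an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True)
    tag = hmac.new(key, body.encode(), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def accept(message: dict, key: bytes, now_s: float):
    """Vehicle side: return a delay-compensated payload, or None to discard it."""
    expected = hmac.new(key, message["body"].encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, message["tag"]):
        return None  # tampered with, or signed with a different key
    payload = json.loads(message["body"])
    age = now_s - payload["sent_at"]  # assumes vehicle and roadside clocks are synchronized
    if not 0.0 <= age <= MAX_AGE_S:
        return None  # too stale (or from the future) to fuse safely
    # Move each object forward by how far it traveled while the message was in flight.
    payload["objects"] = [
        {**o, "x": o["x"] + o["vx"] * age, "y": o["y"] + o["vy"] * age}
        for o in payload["objects"]
    ]
    return payload


key = b"demo-shared-key"  # hypothetical
msg = sign({"sent_at": 10.0,
            "objects": [{"x": 50.0, "y": 0.0, "vx": 10.0, "vy": 0.0}]}, key)

print(accept(msg, key, now_s=10.03))     # fresh: x advanced by about 0.3 m
print(accept(msg, key, now_s=10.5))      # 500 ms old: rejected
forged = {**msg, "body": msg["body"].replace("50.0", "5.0")}
print(accept(forged, key, now_s=10.03))  # tag mismatch: rejected
```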

Another problem is how to gain widespread support for autonomous driving of any kind, let alone one based on smart roads. In China, 74 percent of people surveyed favor the rapid introduction of automated driving, whereas in other countries public support is more hesitant. Only 33 percent of Germans and 31 percent of people in the United States support the rapid expansion of autonomous vehicles. Perhaps the well-established car culture in these two countries has made people more attached to driving their own cars.

Then there is the problem of jurisdictional conflicts. In the United States, for instance, authority over roads is distributed among the Federal Highway Administration, which operates interstate highways, and state and local governments, which have authority over other roads. It is not always clear which level of government is responsible for authorizing, managing, and paying for upgrading the existing infrastructure to smart roads. In recent times, much of the transportation innovation in the United States has taken place at the local level.

By contrast, China has mapped out a new set of measures to bolster research and development of key technologies for intelligent road infrastructure. A policy document published by the Chinese Ministry of Transportation aims for cooperative systems between vehicles and road infrastructure by 2025. The Chinese government intends to incorporate into new infrastructure such smart elements as sensing networks, communications systems, and cloud control systems. Cooperation among carmakers, high-tech companies, and telecommunications service providers has spawned autonomous driving startups in Beijing, Shanghai, and Changsha, a city of 8 million in Hunan province.

An infrastructure-vehicle cooperative driving approach promises to be safer, more efficient, and more economical than a strictly vehicle-only autonomous driving approach. The technology is here, and it is being implemented in China. To do the same in the United States and elsewhere, policymakers and the public must embrace the approach and give up today’s model of vehicle-only autonomous driving. In any case, we will soon see these two vastly different approaches to automated driving competing in the global transportation market.
